Vasco Grilo🔸

Bio

I am a generalist quantitative researcher.

How others can help me

I am open to volunteering and paid work (I usually ask for 20 $/h). I welcome suggestions for posts. You can give me feedback here (anonymously or not).

How I can help others

I can help with career advice, prioritisation, and quantitative analyses.

Posts 238

Comments 3014

Topic contributions 40

Hi Bob and Geoffrey.

- Yes, what we do is very unusual outside of academia—and inside it too. Re: other groups that do global priorities research, the most prominent ones are GPI, PWI, and the cause prio teams at OP.

GPI refers to the Global Priorities Institute, which has since closed down, PWI to the Population Wellbeing Initiative, and OP to Open Philanthropy, which has since rebranded as Coefficient Giving.

Thanks for the post, Huw.

He provides some evidence that satisfaction doesn’t correlate with momentary wellbeing. However, the WHR’s own data finds a highly statistically significant correlation between positive affect and life satisfaction on the national level (Tables 8–10; FWIW it’s likely that they don’t find the same for negative affect because linear models don’t find additional explanatory power in intercorrelated variables).

I think Yasha is particularly concerned about comparisons across countries.

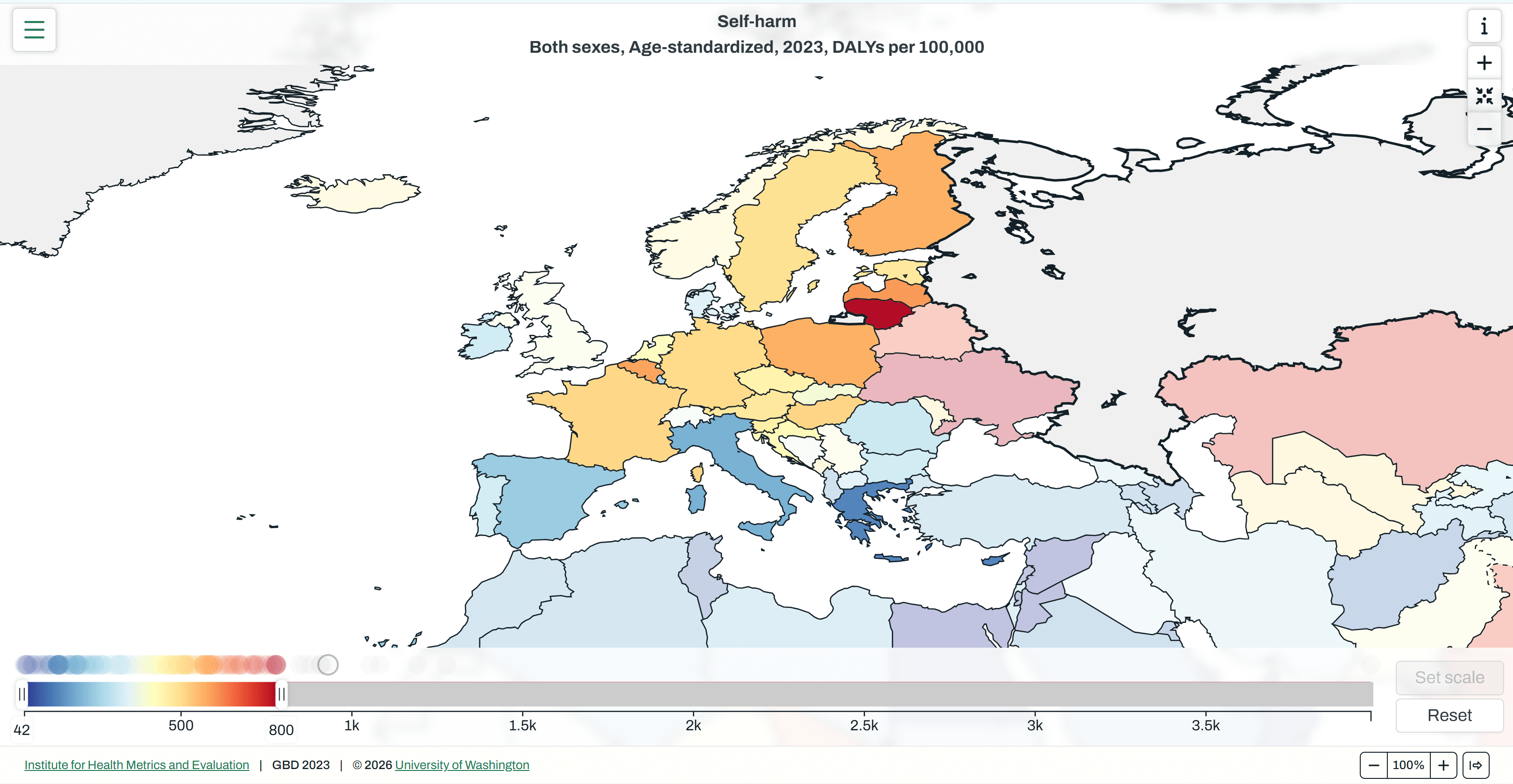

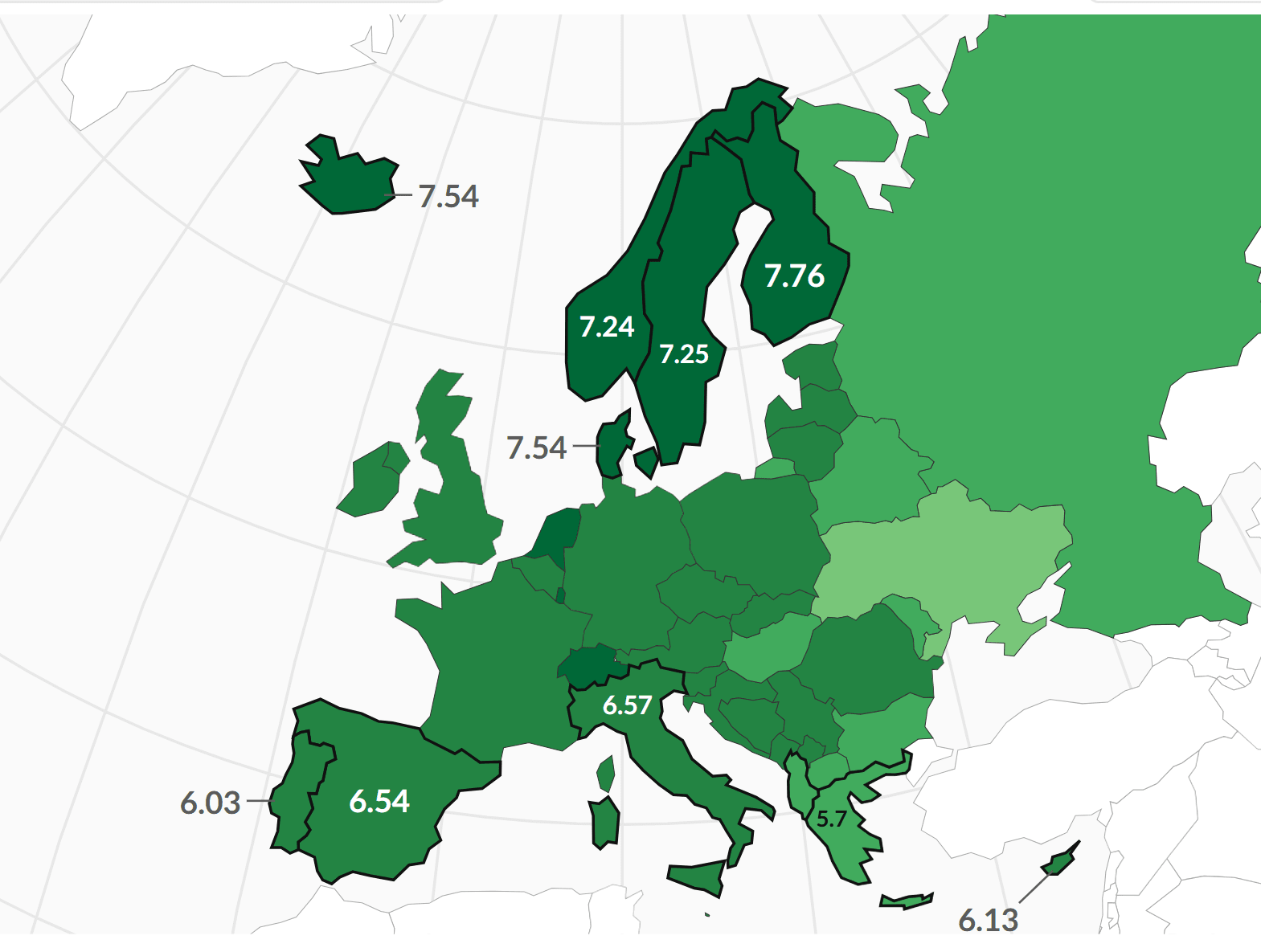

But perhaps the biggest problem with the World Happiness Report is that metrics of self-reported life satisfaction don’t seem to correlate particularly well with other kinds of things we clearly care about when we talk about happiness. At a minimum, you would expect the happiest countries in the world to have some of the lowest incidences of adverse mental health outcomes. But it turns out that the residents of the same Scandinavian countries that the press dutifully celebrates for their supposed happiness are especially likely to take antidepressants or even to commit suicide. While Finland and Sweden consistently rank at the top of the happiness league table, for example, both countries have also persistently experienced some of the highest suicide rates in the European Union, ranking in the top five EU countries according to one recent statistic.

Southern European countries have the lowest age-standardised disease burden per capita from self-harm in Europe, but not the highest life satisfaction.

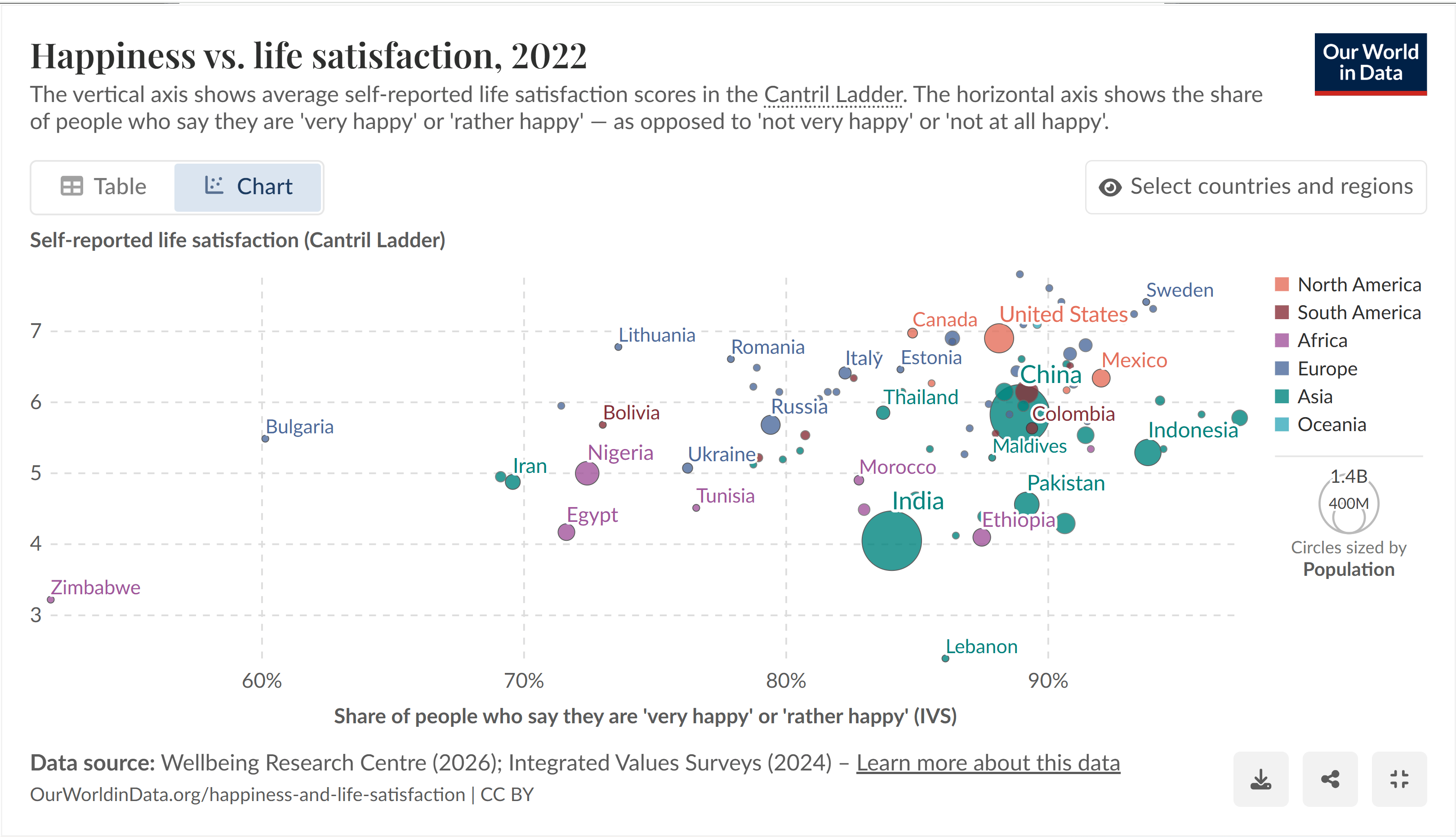

The correlation between life satisfaction and happiness across countries is positive, but very weak.

Thanks for the comment, Michael. I read the post and Seth's original essay, and listened to the episode of The 80,000 Hours Podcast with Seth. I would agree the title of the post is a bit of a misnomer. I think one may update towards a lower chance of digital systems being conscious as a result of Seth's arguments, but they are far from conclusive. I only know I am conscious right now (and I am very confident I was conscious moments ago). So I think a system which is more similar to me at a fundamental physical level should have a higher chance of being conscious. However, I have no idea about what this implies in terms of concrete probabilities of consciousness. As far as I can tell, the available evidence is compatible with frontier large language models (LLMs) having a probability of consciousness of 10^-6, but also 99.999 %.

Hi Daniel and titotal. Thanks for the discussion.

I only know I am conscious right now (and I am very confident I was conscious moments ago). So I think a system which is more similar to me at a fundamental physical level should have a higher chance of being conscious. I have no idea about what this implies in terms of concrete probabilities of consciousness. As far as I can tell, the available evidence is compatible with frontier large language models (LLMs) having a probability of consciousness of 10^-6, but also 99.999 %.

As a side note, I would take for granted that all animals and digital systems are sentient, and focus on assessing the distribution of the intensity of subjective experiences. I think asking about the probability of sentience of an animal or digital system shares some of the issues of asking about the probability that an object is hot. People have different concepts about what "hot" means, and they do not depend just on temperature (for example, the minimum temperature for hot wood is higher than the minimum temperature for hot metal because metal transfers heat more efficiently). I understand sentience as having subjective experiences whose intensity is not exactly 0. However, I suspect some people understand it as having subjective experiences which are sufficiently intense. Different bars for this will lead to different probabilities. Asking about the distribution of the intensity of subjective experiences mitigates this. For example, one could ask about the probability of the mean intensity of what an LLM experienced writing a message exceeding the mean intensity of human experiences. It still seems super hard to get numbers for this, but what they refer to may be more concrete than a vague concept like sentience.

I do not see how philosophical zombies (p-zombies) could be physically possible. If they were just like humans at a fundamental physical level, they would in fact be humans. So they would be as conscious as humans, which I assume are conscious (because I am a conscious human right now, and other humans do not seem relevantly different).

Hi Cynthia. Thanks for the clarifying comment.

Relatedly, I wonder how much welfare varies within production systems. For example, I am interested in knowing which of the following results in a greater increase in welfare. Layers going from:

- A. Median furnished cages in the European Union (EU) to median cage-free aviaries in the EU. By median furnished cages in the EU, I mean ones with higher welfare per chicken-year than 50 % of the furnished cages in the EU.

- B. 10th percentile furnished cages in the EU to 90th percentile furnished cages in the EU.

Do you have a sense of how these compare? The question reminds me of your meta-analysis of hen mortality in different indoor housing systems. Median cage-free aviaries most likely have higher welfare than median furnished cages, and 90th percentile cage-free aviaries most likely have higher welfare than 90th percentile furnished cages. However, it might still be worth advocating for better management of animals within each system. It might be cheaper than moving to a better system, and capture a significant fraction of its benefits. Likewise, I wonder whether it may sometimes be worth advocating for replacing battery cages with furnished cages instead of cage-free aviaries, or for banning battery cages instead of all cages.

Relatedly, I liked the article The Abstraction Fallacy: Why AI Can Simulate But Not Instantiate Consciousness by Alexander Lerchner, and the reply to it by Shelly Albaum.