Daniel_Friedrich

Bio

Exploring consciousness, AI alignment, moral psychology and how they interact in decision-making.

Always happy to chat!

Posts 11

Comments 50

Disintermediated by a computer to replace biases introduced by cuteness and non-verbal expressiveness with biases introduced by symbolic manipulation, humans would without exception rate a pocket calculator or Eliza-style toy script as more likely to be conscious than a dog or a two-year-old child. I don't think anyone sincerely believes this to actually be the case.

I'm not sure what you're imagining here. If you give people a trolley problem (via text only) with a dog on one track and the computer program Eliza on the other, and they can chat with either, most would choose to save the dog, even if its only text output were "whoof whoof".

If you're imagining that the thought experiment would somehow block them from inferring that one entity is an actual dog and the other a program, then yes: I agree that language increases empathy, but I'd say the magnitude is much smaller than that of "non-verbal cues". If you had a trolley dilemma with one blank track and one track with either a dog or Eliza, I think 90% would pay $0 to save Eliza but often a lot of money to save the dog.

Unless we're positing dualism, what we perceive as consciousness is an emergent property of complex chemical processes rooted in our biology (and the imperatives of our biology to survive and self-replicate).

Most non-dualists would say consciousness is a feature of information processing (functionalists, illusionists, non-reductive materialists) or something as fundamental as physics (Russellian monism, pan(proto)psychism). The particular emergentist and biological theory that is rooted in the instinct to self-replicate and survive is something I'd expect 0.1-7% of philosophers of mind to endorse. But whatever the actual percentages, I definitely disagree that dualism and this theory are the only options. The phrase "rooted in [biochemical processes]" is the least controversial, but it still connotes something most might not endorse - i.e. that biology and chemistry are the correct category or level of description (Axis 3 in this taxonomy).

I endorse the temperature approach. I'm not sure illusionists would accept the question "What's the % probability that an entity is conscious?" as meaningful but maybe a similar question could indeed be universally accepted, like "Compared to your pain intensity 1 (being poked by a needle), what's your central estimate for the intensity of suffering experienced in scenario X?"

Just to clarify, my argument didn't concern classical p-zombies but what I call "honest p-zombies" - intelligent humanoid entities capable of metacognition but without any intuition similar to our phenomenal intuitions.

Asking whether a process is "close enough [to the brain] to produce the same effect" implicitly begs the question - i.e. assumes consciousness is biological.

P-zombies who wouldn't describe their sensations in terms like "qualia" would likely have an evolutionary fitness equal to that of humans. I don't know if they're possible, but I think this demonstrates evolution wasn't optimizing for consciousness. Therefore, we shouldn't ask "is such a system sufficiently close to the brain" but "is it sufficiently close to the processes that happen to make the brain (phenomenally) conscious".

In general, there isn't agreement about any correlate of consciousness within philosophy of mind - there are well-regarded thinkers who claim it's not real (Frankish) or that it's the basic substance of the universe (Goff). I think it's possible consciousness is similar to, say, intelligence or humor, which means you need a complex system to meaningfully implement it. However, I think it's unlikely that "complexity itself" is what gives rise to consciousness, e.g. sunspots are very complex (~unpredictable interaction of many elements).

I'm not convinced by Anil Seth's narrative about our biases in mind attribution.

I've been to his talk where he summarized these points. He talked about our inherent tendency to emotionally relate to entities that can use language. Later, he presented a picture of a transistor and a picture of a monkey and asked which seems more conscious on priors.

The prime mechanism by which humans decide whether an entity is valuable and conscious is empathy. We have evolved to feel empathy - that is, modelling "what it is like to be them" - towards entities that have faces, limbs, fur and a squishy body. We feel a lot of empathy for pets and babies - entities that don't control language. And we feel zero empathy for the Chinese room.

The argument relies a lot on trying to depict computers as something rigid, cold and dead and life as something interesting, warm and energetic. This works well for our empathy module but does not convince me as a philosophical argument.

I'm curious whether there's any definition of the brain's processes as "non-algorithmic" that doesn't end up in Russellian monism (which I'm inclined to support but suspect Seth isn't). Aren't the laws of physics themselves an algorithm? I see autopoiesis as the most interesting connection between consciousness and life, but precisely when you find a clear conceptualization like this, it becomes unclear

- why it couldn't be implemented digitally - e.g. aren't LLMs autopoietic systems, where each token determines the next one?

- what predictions it makes about the variation in human consciousness (in terms of modalities, intensity and reportability)? E.g. if consciousness is dependent on the degree of embodiment, does it predict Stephen Hawking had a low intensity of consciousness? Is the variance found in human consciousness better explained by the computational differences or differences in the mentioned random biological interactions?

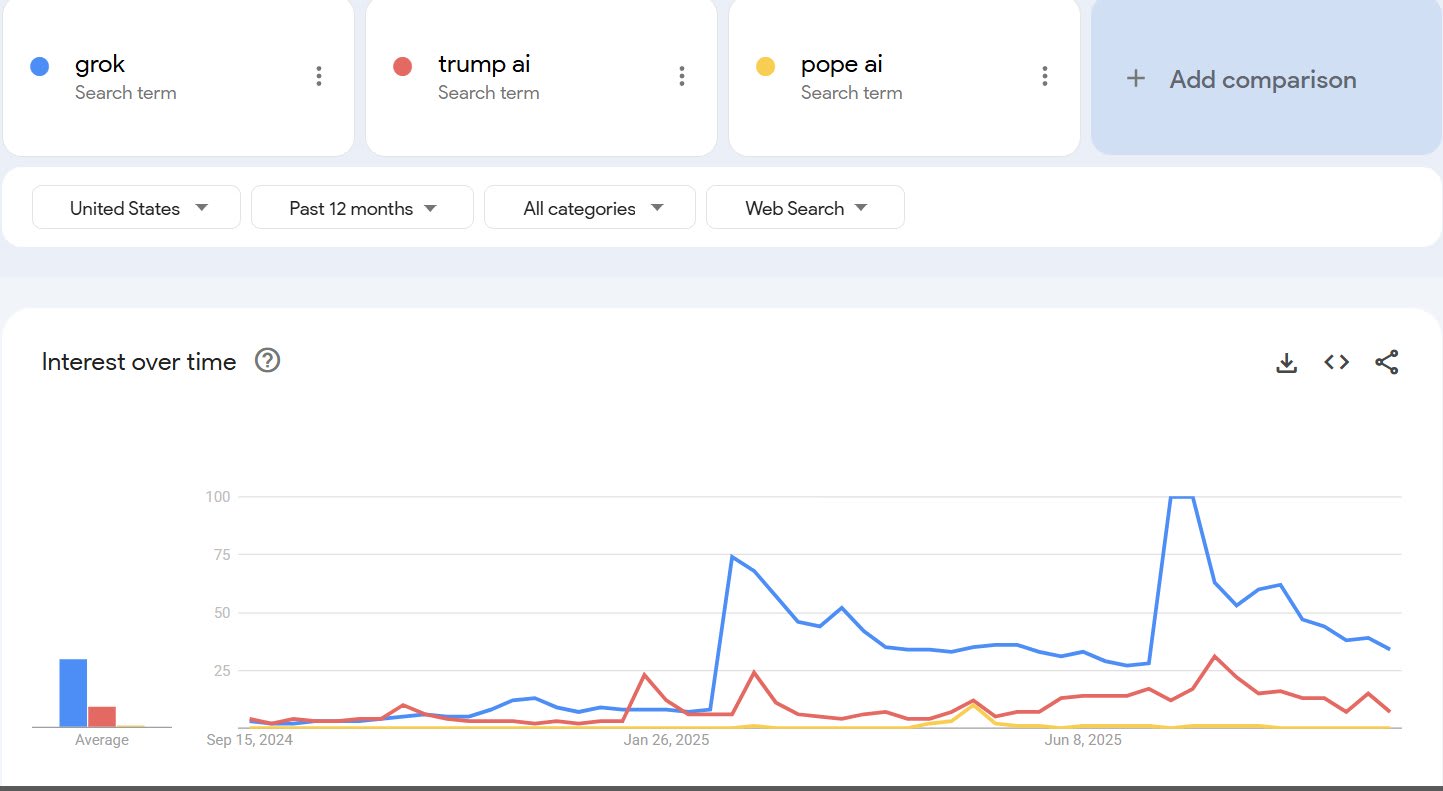

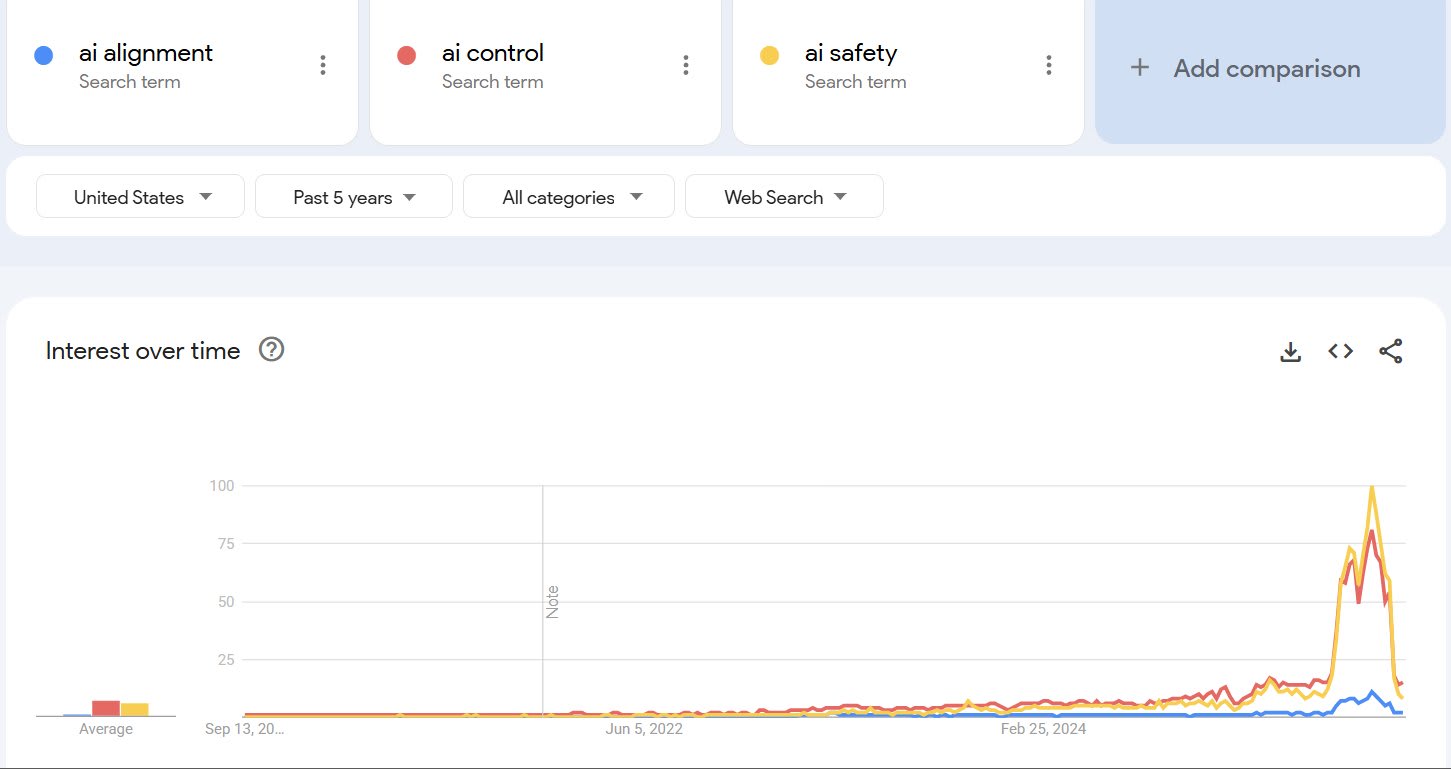

We have seen an order-of-magnitude increase in the interest in AI alignment, according to Google Trends. Part of it (the July peak) can be attributed to Grok's behavior (see my little analysis). The YouTube channel AI in Context correctly identified this opportunity and swiftly released a viral video explaining how the incident connects to alignment. The September peak might be attributed to the release of If Anyone Builds It.

Fortunately, the WWOTF link still works: https://whatweowethefuture.com/wp-content/uploads/2023/06/Climate-Change-Longtermism.pdf

Alternatively, it loads a little faster on Web Archive: https://web.archive.org/web/20250426191314/https://whatweowethefuture.com/wp-content/uploads/2023/06/Climate-Change-Longtermism.pdf

I disagree with your argumentation but agree there's quite a significant (e.g. 6.5%) chance that you're correct about the thesis that consciousness has causal efficacy through quantum indeterminacy and that this might be helpful for alignment.

However, my take is that if the effects were very significant and similarly straightforward, they would be scientifically detectable even with very simple fun experiments like the "global consciousness project". It's hard to imagine "selection" among possibly infinite universes and planets and billions of years - but if you manage to do so, the "coincidences" that brought about life can be easily explained with the anthropic principle.

I see this as a more general lesson: People are often overconfident about a theory because they can't imagine an alternative. When it comes to consciousness, the whole debate comes down to whether something that seems impossible to imagine reflects a failure of imagination or a failure of a theory. Personally, I give most weight to Russellian monism, but I definitely recommend leaving some room for reductionism, especially if you don't see how anyone could possibly believe it, as was the case for me before I deeply engaged with the reductionist literature.

But I'm glad whenever people aren't afraid to be public about weird ideas - someone should be trying this and I'm really curious whether e.g. Nirvanic AI finds anything.

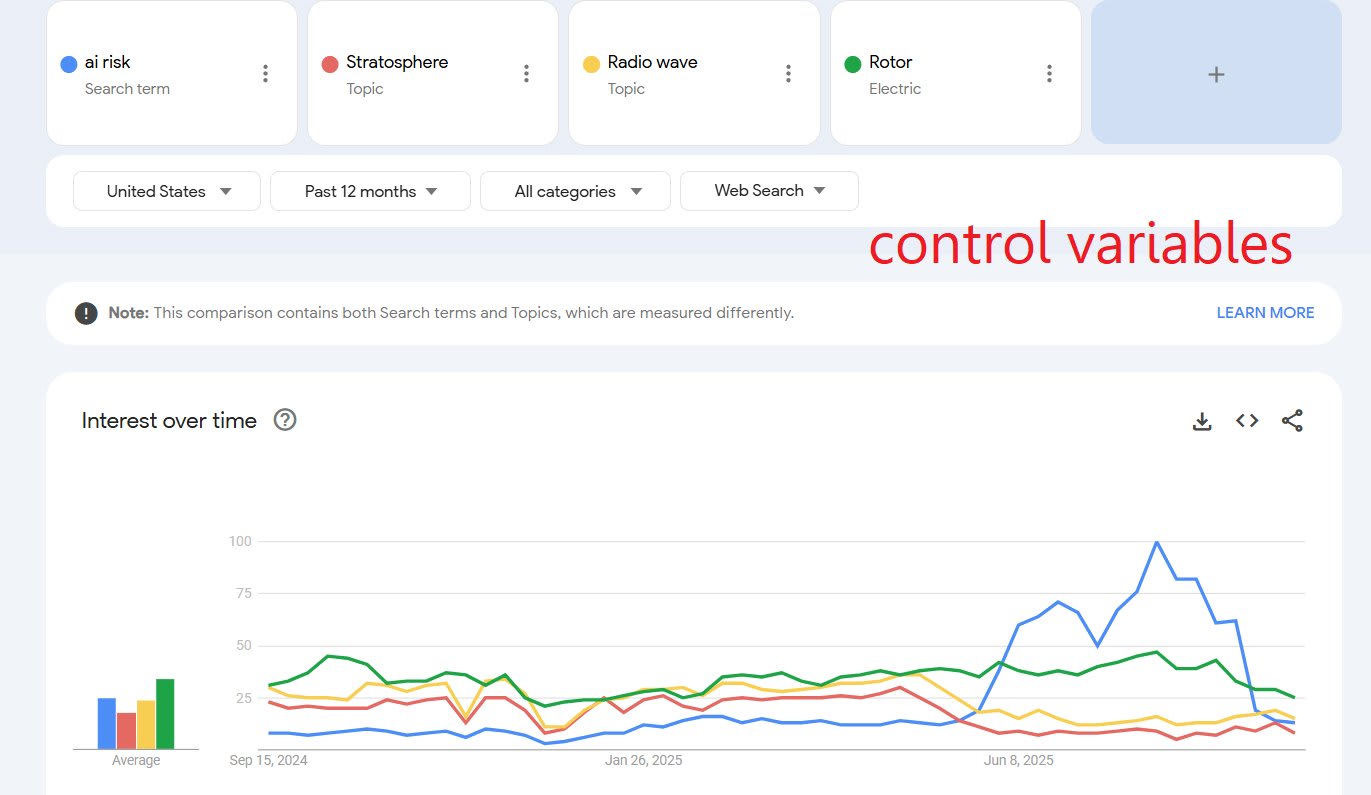

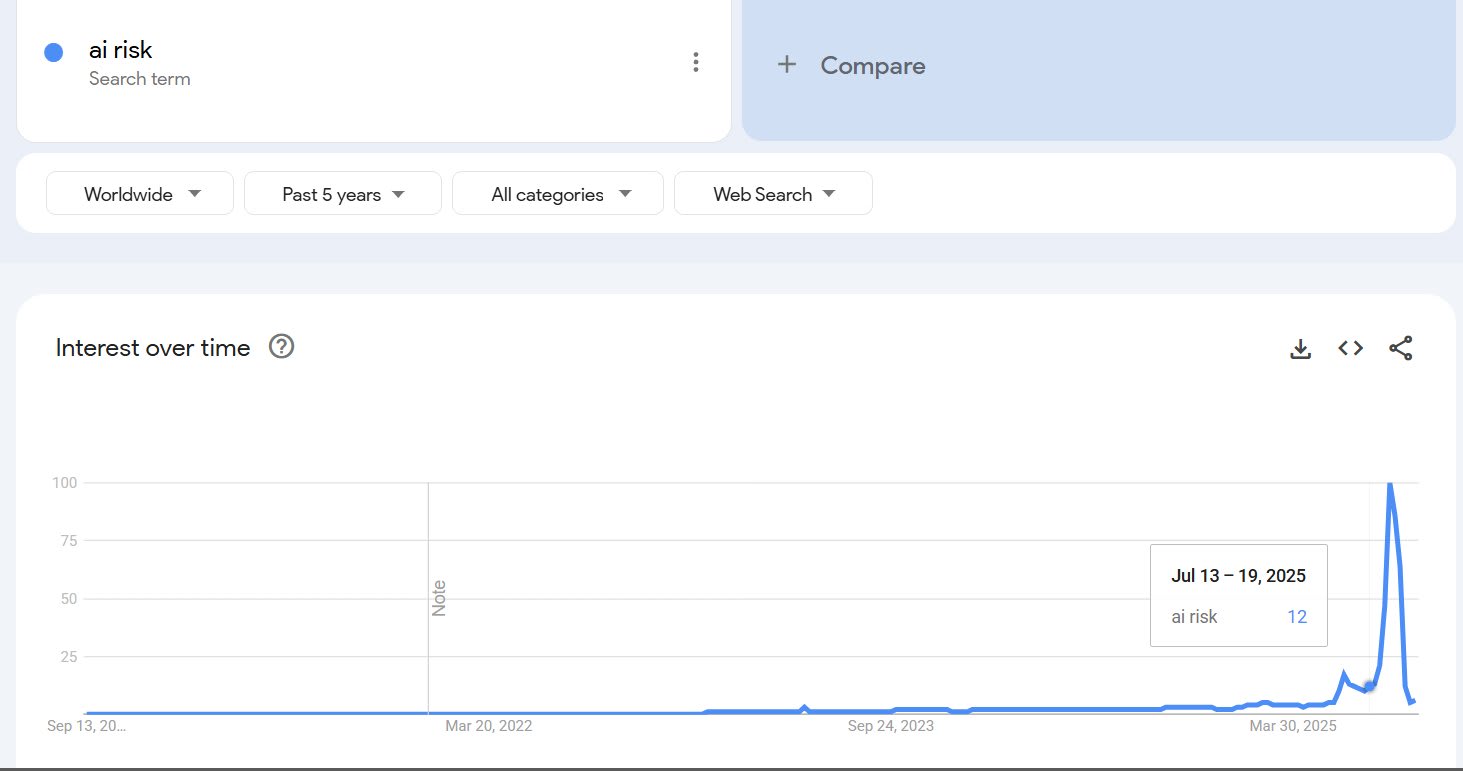

The MechaHitler incident seems to have worked as something of a warning shot: Google interest in AI risk has reached an absolute all-time high. Trump's AI plan came out on the same day, but the comparison suggests Grok accounts for ~70% of the peak.

I can't quite dismiss the possibility that the interest was driven by new Chinese AI norms, because Chinese people have to use VPNs, so the geography isn't trustworthy. However, if this were true, I would expect that the number of searches for AI risk in Chinese on Google would be higher than roughly zero (link).

Thanks for clarifying - sorry if it sounded like I was twisting your words - I was trying to think through multiple versions of the experiment you propose.

The amount to which we attribute/misattribute consciousness to different entities depends on the correct theory, so it is very uncertain at this point. But I would endorse this broader research program of systematically decoding which of our intuitions about consciousness are biases and which are valid measurements of brain data.

One reason why I thought about trolley problems was that they show not only the % of people who hold an abstract belief about an entity's consciousness but also the degree / intensity of its perceived experiences. I'm surprised to see a significant fraction of people (1, 2) say current AI is conscious, although a poll about a personal sacrifice like this one (in a less narrow Twitter bubble) might be more relevant to assess how serious they are - and might better model the kind of moral error that we're more likely to make during the AGI transformation.

Regarding "biochemical processes" - the phrasing matters a lot here. Searle, who came up with The Chinese Room, concludes this thought experiment by suggesting thinking requires the specific biochemistry that brains use just like lactation or photosynthesis are defined by specific molecules, rather than algorithms. This formulation is specifically chosen in contrast to functionalist/computationalist views which are mainstream nowadays.